Research and advocacy of progressive and pragmatic policy ideas.

Flattening the Curve of Online ‘Fake News’ in Malaysia

The need for pre-emptive measures to slow the spread of misinformation.

By Khairil Ahmad & Jia Vern Tham, 13 May 2021

At the time of writing, the Klang Valley is in the fifth day of its third Movement Control Order (MCO) since the COVID-19 pandemic broke out last year. Given the alarming number of daily cases, many of us had anticipated the MCO announcement, despite our hopes for a ‘new normal’ Raya.

Apart from the appalling case numbers, the anticipation of an MCO was also partly fuelled by rumours of its announcement swirling through social media. The subsequent response by the authorities, however, is emblematic of our incomplete approach to misinformation today.

In response to the earlier rumours, the government, through the Ministry of Communications and Multimedia’s Quick Response Team (QRT), issued a statement branding them ‘fake news’. The statement came with a reminder to the public not to share unverified news, which can cause confusion and anxiety. This response encapsulates Malaysia’s long-standing approach to information disorder: fact-checking (when needed), followed by press statements denying a message’s authenticity, accompanied by public reminders and warnings.

This approach is not wrong per se, but is it sufficient? The received wisdom has been that people will be dissuaded from sharing a post once it has been checked and confirmed as false. However, a recent study by Pennycook et al. suggests otherwise: flagged content does not always influence people’s intention to share. People may continue to share such information out of “inattention”, because they are distracted by other factors, such as the desire to attract followers.

Confirming the truth or falsity of a post or message is, we argue, insufficient. The reminders and warnings to users also fail to account for the psychology of sharers. Instead, we assume bad intent from the outset, which in turn leads to our overwhelming reliance on punitive measures.

‘Fake news’ can threaten public safety. However, as we argued in a recent related article, we need more effective and innovative ways of managing misinformation. The brutal fact is that ‘fake news’ and misinformation have been with us since time immemorial, but the way they proliferate today means that our initiatives (and mindset) need to be updated.

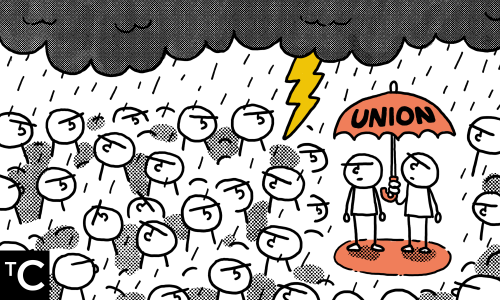

Initiatives to deal with ‘fake news’ should aim to pre-empt its spread by intervening at the point of sharing. This can be done by “nudging” social media users to think about the accuracy of the news they are about to share. Nudging gently steers people towards informed choices without prescribing the “truth” to them.

A number of social media platforms, including Facebook, Twitter and TikTok, already apply their own versions of nudging. TikTok, which engaged behavioural scientists inspired by Pennycook et al.’s study, has seen a significant reduction in the sharing of flagged content.

We believe that collaboration between the government and social media platforms to step up such nudges (in local languages, for example) would be a step in the right direction. Last month, the COVID-19 Immunisation Task Force (CITF) started working with Facebook to remind people to look beyond the headlines and to check the accuracy of the information they are about to share. This is a good start, but these prompts need to be placed at the right points of content consumption to reduce unthinking, reflexive sharing.

Nudging can also reduce our reliance on fact-checking, which can be slow and laborious. With nudging, we no longer need to establish the “truth” of each piece of information. We can instead focus on slowing down unproductive sharing behaviour. Pennycook et al.’s study shows that prompting people to consider the accuracy of the news items they are about to share can reduce the spread of misinformation.

Rather than relying on debunking ‘fake news’ after the fact, we can get ahead of misinformation through nudging. This would help reduce the need for punitive measures while protecting our right to (responsible) free speech and media consumption. The question is this: will we adopt innovative interventions, or will we continue to rely on the same old way of handling misinformation?

The Centre is a centrist think tank driven by research and advocacy of progressive and pragmatic policy ideas. We are a not-for-profit and a mostly remote working organisation.